LS8 - Software Testing

Software Testing (? days)

Software Testing

Dynamic Testing

Coverage Collection

- (up until coverage granularity spectrum for quiz)

Directed Greybox Fuzzing

Fuzz Testing - Sanitizers

Symbolic Execution - BG: SAT and SMT solvers

Misc. Testing Topics

Mock Testing

Future Directions and Research

Slides

Summary

Fuzzer Summary:

- Fuzzing is an effective testing mechanism

- Unlike other testing approaches it can find new inputs that cause program misbehavior

- Mutation and coverage are important for generating effective fuzzers

- Directed greybox fuzzers (DGF) can target specific parts of the codebase

- Sanitizer provide additional information needed to find silent UB bugs

Symbolic Execution

- Symbolic execution explores paths systematically using symbolic inputs + SMT solvers

- Path constraints define equivalence classes - one test input per class suffices

- KLEE: practical symbolic execution on LLVM bitcode

- Challenges: path explosion, loops, environment modeling, solver limitations

Software Testing

Process of finding bugs and vulnerabilities in software

“Program testing can be used to show the presence of bugs, but never to show their absence”

- E. W. Dijkstra

Types:

- Testing based on dynamic analysis

- Software executed, monitor for assertion failures and other bugs during execution

- Unit testing, integration testing, fuzz testing

- Testing based on static analysis

- Source code analyzed without execution

- Analysis identifies known bug patterns: uninitialized variables, unsafe data flow, null pointer dereferences, etc.

- Dta flow analysis, control flow analysis, abstract interpretation

- Hybrid: Symbolic Execution

Dynamic Testing

For example with JUnit:

- Test individual funcs

- Adds assertions to check it conditions hold

- Test corner-cases manually create

Difficult to cover all code with unit testing (LOTS and LOTS of code, Linux Kernel ~30mil) - automated testing is a sub-field of automated software engineering

Silent Failures:

- As opposed to ‘loud’ bugs that cause crashes/assertion failures, some bugs are silent

Detecting silent (UB) bugs requires both, exercising the buggy code with the right inputs AND an oracle to identify the buggy execution

Memory safety:

- Languages like C lack memory-safety

- Buffer-overflow

- Use-after-free

- Double-free

- None of the above bugs necessarily crash the program

Fuzz Testing

Goal: Find app inputs that reveal bugs

- Test the entire app instead of individual functions

- General inputs randomly until an error is uncovered

Bugs revealed:

- Incorrect argument validation, incorrect type casting

- Mem bugs (overflows, leaks, …)

- Logic errors through assertion failures

- Applications written in any language can be fuzzed

Blackbox fuzzing

- Simple loop invoking app with new inputs

- No internal visibility into the app

Pros: - Simple, generating random inputs couldnt be easier

Cons: - Random inputs are not good inputs

- IE imagine trying to create a SQL command with random inputs, it would just be rejected without even executing

Mutation fuzzer

Seeds

Start with valid inputs as ‘seeds’

Mutate them based on some ‘strategy’

- Shuffle bytes, erase bytes, insert byte, change bytes

Pros: - Simple to implement

- Good for binary inputs and streams (JPEG file encoder/decoder)

Cons: - Generates many invalid inputs for applications that expect structured inputs

Dictionaries:

- Provide a dict of ‘keywords’ (like SQL: CREATE/DELETE… or HTML tags)

- Mutate input by replacing/adding a chosen keyword from this dictionary

Pros: - Still simple

- Works reasonably well for structured input

Cons: - Still generates many invalid inputs

Grammar-aware fuzzing

- Define the input format as a CFG (BNF rules)

- Generate inputs by randomly expanding production rules, will only generate syntactically valid inputs

- (can imagine it working for SQL/HTML/etc.)

Pros:

- (can imagine it working for SQL/HTML/etc.)

- Always syntactically valid, tests deeper code

- Can weight rules to target certain features

Cons: - Must write/maintain a grammar for each input format

- Only generates valid inputs, doesnt test invalid inputs

- Generating valid inputs is expensive

Fuzzer Success Factors

-

Fuzzer throughput

-

Percentage of valid inputs generated by the mutator

- Obvious tradeoff between the two (generating valid input is costly, but more often effective)

-

Also, test ‘depth’ and code coverage

-

‘Most promising’ fuzz inputs - simple heuristic is code coverage

- Amount of code/execution paths explored durign testing

-

Input seeds that increase coverage are ‘more promising’

- High coverage = high bug-finding potential

- Almost an RL / evolutionary algorithm?

Coverage guided Fuzzing

Record coverage

Prioritize input seeds which give higher coverage

Gradually uncover all program paths

Also known as ‘greybox fuzzing’

AFL

American Fuzzy lop (AFL)

Fuzzer for C/C++ applications

Coverage Collection

Control flow graph (CFG)

- One possible representation of program execution

CFG consists of basic blocks (BBs):

- e.g. - Entry (of a func), if statements, variable updated, exit, etc.

During fuzzing, if new path is discovered that expands CFG, it considers the input “interesting” - Fuzzer records at the BB level

Compilers

Many automated software engineering techniques, including fuzzing, leverage the compiler

afl-gcc is a wrapper around gcc

- It gets inserted into the compilation process

- Adds a ‘coverage collector’ into the generated binary

Records the code coverage generated by taking certain edges in the CFG

Path Coverage

Track the exact sqeuence of branches taken

- Same branches, different order = different path

- Bugs might depend on the exact path

However, leads to path explosion

- N branches has up to 2^N possible paths

- Loops too, every iteration is a new path

Coverage Granularity Spectrum

- Branch/edge coverage: which branches were taken (AFL)

- Value profile: how close comparisons are to being satisfied (libFuzzer)

- Path coverage: exact sequence of branches (most precise, but exponential complexity)

Weigh fine grained coverage vs performance overhead

- Depends on application

Directed Greybox Fuzzing (DGF)

Greybox fuzzing can’t be directed, works autonomously

Directed fuzzing has many applications

- Path testing: reach change statements

- Crash reproduction: reproduce reported bugs

- Sensitive functions and APIs

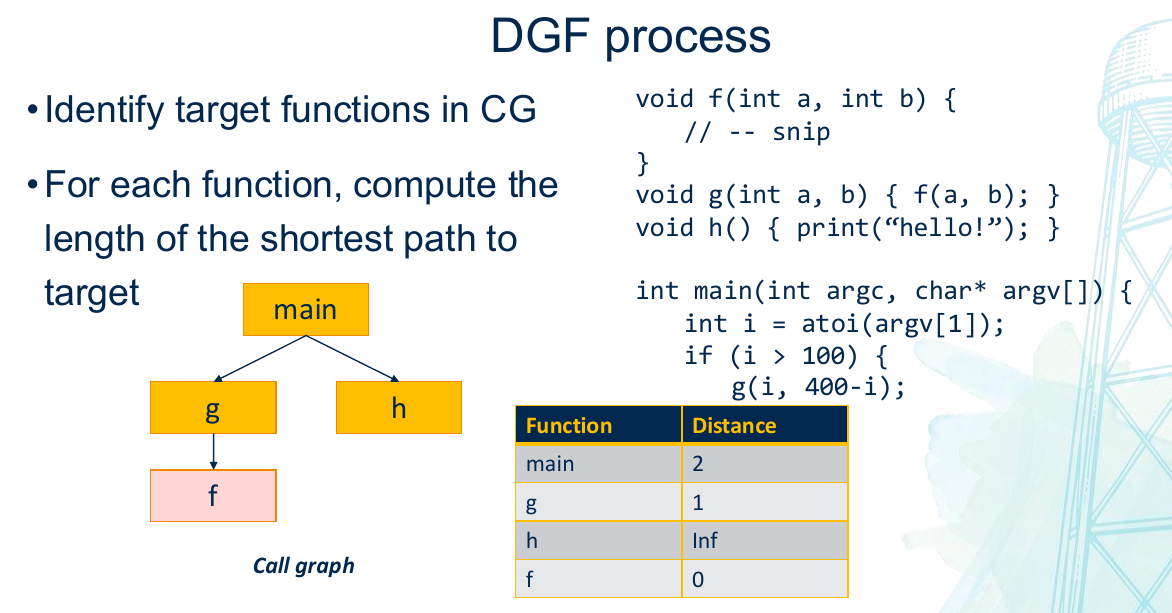

DGF Compiler Instrumentation

Extract call graph (CG) and control-flow graph (CFGs)

- Compute distance to target locations

- Instrument program to aggregate distance

CG - Directed graph where:

- Nodes represent functions

- Edges represent calls (edge from A to B means function A calls B)

DGF - Directed Greybox Fuzzing:

- Identify target functions

- For each function compute the shortest path to target

- For each run, fuzzer compares the BBs executed for the shortest distane to the target

- Keep seeds with lower distance

- Discard seeds with higher distance

- Executions progress towards a target

Fuzz Testing - Sanitizers

Memory safety bugs: Fuzzers excute program with random inputs and look for crashes, but bugs may or may not crash program

- Undefined Behavior (UB)

Sanitizers

Sanitizers - Oracles for memory safety bugs

- Adds additional compiler instrumentation to record object metadata

- Turn silent UBs to crashes

- Popular options

- Address Sanitizer (ASAN) - can detect buffer overflow

- Leak Sanitizer (LSAN) - can detect memory leak

- Thread Sanitizer (TSAN) - can detect data races

- SIGNIFICANTLY slows down the application, but can detect bugs

Sanitizers - Insert additional instrumentation

- Implemented as an additional compiler pass in

afl-clang

Address Sanitizer

Add code to:

- Record size of heap buffers

- Check if an out-of-bounds access occurs

Option 1:

- Maintain metadata for each object

- e.g. a hashmap: {obj_addr: len}

- Instrument heap allocation with hashmap insterion

- Instrument pointer dereference with hashmap lookup

- Very expensive

ASAN is a sanitizer used with popular fuzzers

- Insert “red/poison zones” around memory

- Updates to red zones indicate buffer overflow

- Shadow memory

- Record metadata about red and accessible zones

- Each byte in shadow memory corresponds to 8 bytes in app

- 0x00 (valid); 0xFF (poisoned range); 0x01-0x07 (partially poisoned)

- Compiler adds instruction before memory access to check if shadow memory byte is poisoned

- Checking shadow memory is very fast (know exactly where to look, and what to check once there)

Fuzzer success factors:

- Fuzzer throughput (exec/s)

- Percentage of valid inputs generated by the mutator

- Type of coverage recorded

- Sanitizer vs. no sanitizer

- Ideally, want a high percentage of valid inputs + fine-grained code and data coverage + sanitizers

- Unfortunately reduces throughput

- Tradeoff: finding the right balance for your use case!

Symbolic Execution

Fuzzer limitations:

- Fuzzing generates inputs randomly - effective but incomplete

- Struggles with:

- Magic constants (

x==0xDEADBEEF) - Difficult to hit randomly - Deep paths - Reaching code guarded by many conditions

- Checksums and hash comparisons - random bytes rarely pass validation

- Magic constants (

Can we systematically generate inputs that comprehensively explores different program paths

Background - SAT and SMT solvers

SAT - Given a formula in CNF, find a satisfying assignment

- SAT is NP-complete, worst case exponential

- SAT solvers are foundation for SMT solvers

SMT - Generalizes SAT, boolean variables are replaced by predicates over richer theories - Input: First order formula over background theories

- Output: Is the formula satisfiable? If so provide assignments

Common theories in program analysis: - Arithmetic - linear and nonlinear integer arithmetic

- Arrays - read/write operations on arrays

- Bit-vectors - fixed width binary arithmetic (models machine integers exactly)

- Uninterpreted functions - function equality. f(a) = f(b) if a=b

Example SMT: - examples of satisfying (x,y):

- (8,1), (6,2), (4,3)

- examples of satisfying (x,y):

- Saying:

- write(A, 3, 42) -> A’

- A’[x] = A[x] where x != 3

- A’[x] = 42 where x == 3

- write(A, 3, 42) -> A’

- Satisfiable: i=3

- Saying:

Popular SMT solvers:

- Z3, STP, and more

Symbolic Execution

Execute with symbolic inputs instead of concrete values:

- x=5 turns into x=

(symbolic var)

As execution proceeds

- Variables hold symbolic expressions

- At each branch, the engine forks into two paths

- Each path accumulates a path constraint

Workflow

- Mark inputs as symbolic

- Engine executes symbolically

- At branch: query solver

- Fork into feasible paths

- At path end: solve constraint

- Solver returns concrete input (test case)

Implementations: KLEE, S2E

| Concrete Execution | Symbolic Execution | |

|---|---|---|

| Variables | Concrete (x=5, y=3) | Symbolic (x=a, y=b, z=2b) |

| Paths | One path through program | Explores all paths |

| Tests | One input at a time | Each path = equivalence class of inputs |

| Speed | Fast - native execution | Slower - needs SMT solver queries |

| Types | Fuzzing, unit testing | Symbolic execution known as whitebox testing |

Symbolic execution finding bugs

For each dangerous operation: insert a check and fork

- Division: Fork on divisor == 0

- Array access: Fork on index < 0 or index >= length

- Pointer dereference: Fork on pointer == NULL

- Assertion(p): Fork on

- Integer overflow: Fork on result exceeding type bounds

If the fork is satisfiable: - Solver provides a concrete bug-triggering input

Else: - Proves bug can’t happen on this path

Challenges:

- Loops:

- Loops with a symbolic limit leads to path explosion

- A DFS approach will get infinitely stuck in a loop due to path explosion

- One solution: Loop with a concrete limit

- Loops with a symbolic limit leads to path explosion

- Pointers

- A symbolic pointer can represent ALL variables in the application

- Solution: Use other static analyses techniques (like alias analysis) to precompute valid pointer targets

Challenges Revisited:

- Path explosion (N branches -> 2^N paths)

- Loops and recursion (can produce infinite trees)

- Heap and pointer modeling; symbolic addresses, aliasing analysis

- SMT solver limitations; floating point are hard/undecidable

- Environment modeling - system calls, file I/O, network, concurrency

Symbolic Exec works best on: - Smaller, well bounded programs or functions

- Programs with limited use of external libraries

- Unit-level testing rather than whole-system testing

Misc Testing Topics

Continuous Integration (CI)

Code changes are automatically built, tested, and integrated into a shared repo multiple times a day

Process:

- Developer commits code

- CI pipeline trigger

- Project build

- Run automated tests

- Integrated into GitHub and Gitlab

- Github Actions

Containers for CI:

- Provide uniform build system for continuous integration (No $PATH vars, missing binaries etc.)

- Automate deployment via continuous deployment (CD)

- Solves work-on-my-machine issues

Github Actions

- Triggered when an even occurs

- Push event, pull request open

- Workflow contains

- One or more jobs

- Each job has its own runner on which it is executed (typically a container)

- Each job has one or more steps

- A step is either a script, command, or another action (recursion)

- Github Action specified in a YAML file

- defined in

.github/workflows/[NAME].ymlfile - define triggers

- specify jobs and steps (e.g. build, test)

- defined in

Mock Testing

Simulate the behavior of real objects in a controlled way

Why?

- Isolate components for unit testing

- External systems (APIs, Databases)

- Complex or time-consuming operations

e.g. mock a sendEmail func without actually emailing a client

Examples:

- Mockito

- EASYMOCK